The Spec Is the Program, Rust Is Just the Compilation Target

TABLE OF CONTENTS

Need Help?

Our team of experts is ready to assist you with your integration.

I recently wrote about how AI changed the way I think, not just how fast I code. A lot of people reached out asking what my actual workflow looks like. Fair question.

Rather than describe it abstractly, I want to walk through a real project: IncidentBench, a Kubernetes-native resilience benchmark for log analytics platforms. Five Rust crates, a K8s operator with custom resources, gRPC coordination, Kafka integration, a CLI with a live terminal UI, and an HTML report generator. All built with AI assistance.

But here’s the part that might surprise you. The code generation was the mechanical part. The real work, and where AI made the biggest difference, was in writing the spec.

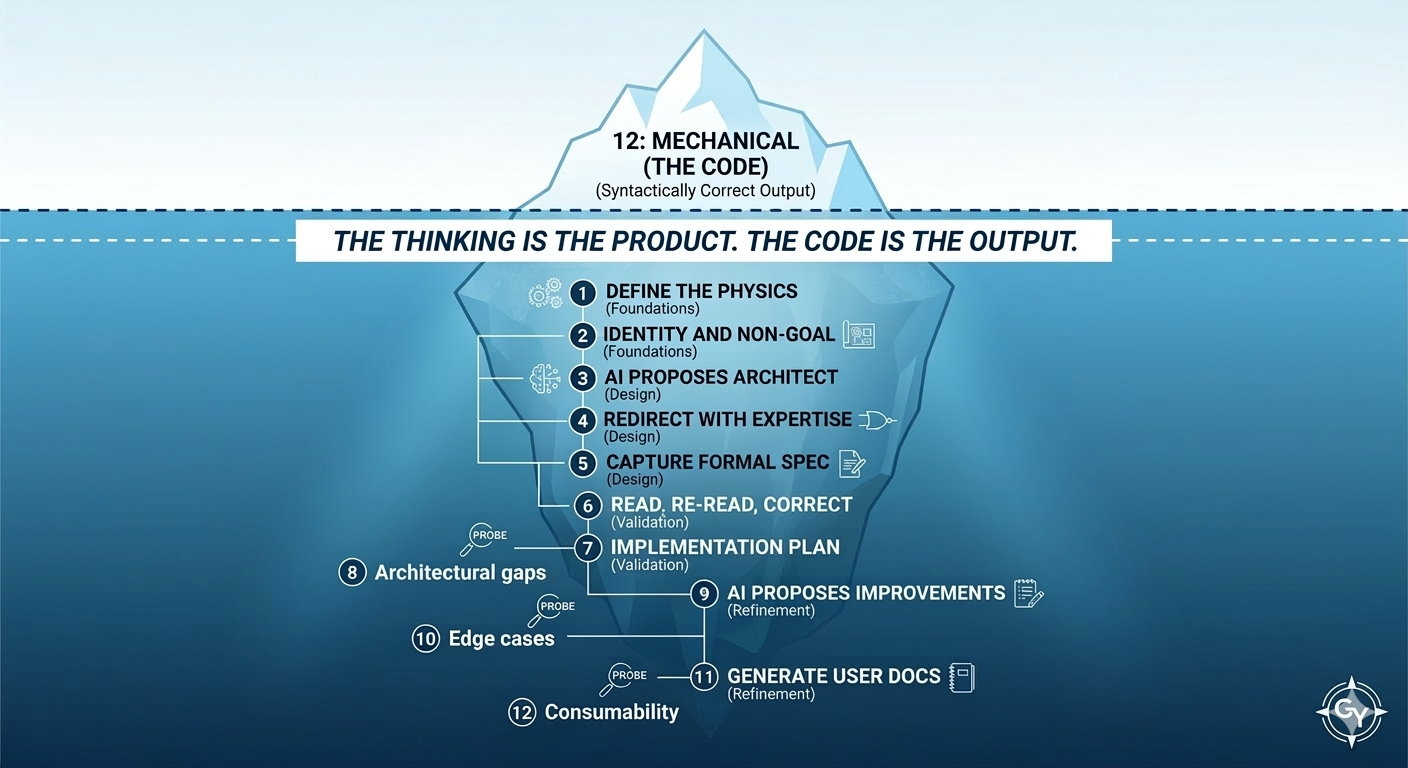

The Workflow, in One Sentence

Use AI to think bigger and define what to build. Then let the type system and compiler turn that spec into a working system.

Most people talking about AI-assisted coding focus on the last mile: generating functions, writing tests, autocompleting boilerplate. That’s useful, but it’s the least interesting part. The real leverage is upstream, in the exploration and specification phase, where you’re deciding what to build and why.

Here’s the actual sequence I followed to build IncidentBench. This isn’t a set of abstract principles. It’s the specific steps, in order, with the reasoning behind each one.

Step 1: Define the Physics of the Problem

I didn’t start by describing what I wanted to build. I started by describing how the world works.

My opening prompt to AI was about the environment, not the solution:

The modern observability and security analytics environment looks like this: logs spike unpredictably, queries spike during incidents, teams share clusters, data volumes are unbounded, object storage is the durable layer, compute must scale elastically. Traditional systems break at the intersection of ingestion spike, query spike, rebalancing, and shared state. However, there are no benchmarks that model this real problem.

Notice what’s not in this prompt. No mention of Rust. No mention of Kubernetes. No mention of what the benchmark should look like. Just the physics of the problem and the gap.

This matters because it forces AI to reason about the problem space before jumping to implementation. If you start with “build me a K8s operator that benchmarks log platforms,” you get a K8s operator. If you start with how the world actually works, you get a conversation about what would actually solve the problem.

Step 2: Define the Identity and Non-Goals

After AI summarized the high-level requirements, my next move was to constrain what this thing is and what it isn’t, before any design work started:

This is not a steady-state throughput benchmark. It is a resilience benchmark. Under spiky ingestion and spiky queries: queries stay stable, ingestion keeps up, no cascading failure, recovery is fast.

That single sentence, “this is not a throughput benchmark, it is a resilience benchmark,” probably prevented dozens of wrong turns in the spec. Every time AI might have drifted toward measuring peak QPS or max ingest rate, that identity constraint pulls it back.

Non-goals are as powerful as goals. They tell AI where to stop. Without them, you get scope creep and gold-plating in everything that follows.

Step 3: Let AI Propose an Initial Architecture

At this point, I let AI take the first swing. It proposed a system architecture: a single binary workload generator that would use OpenSearch bulk APIs to push data and run queries. Reasonable. Conventional. And not what I wanted.

But this step is important. You need AI to propose something concrete so you have something specific to react to. A blank canvas is harder to correct than a wrong answer.

Step 4: Redirect with Domain Expertise

This is the critical step that separates useful AI-assisted design from generic output. I didn’t say “make it better.” I made specific corrections with specific rationale:

Rust, not Go or Python.

Because the benchmark itself cannot be the bottleneck when measuring a system’s capabilities under stress. No garbage collector means no latency jitter that gets misattributed to the target platform. This is a performance requirement that drives the language choice.

Kubernetes operator with CRDs, not a single binary.

Because a single binary can’t generate enough load to stress a production system. It needs to auto-scale. And the scenario definition should mirror the entities of the physical world we’re simulating, not implementation details of the benchmark or the system under test. CRDs give you declarative scenario definitions that read like a description of the incident, not like benchmark configuration. This is an ergonomics requirement that drives the execution model.

Kafka as the ingestion path, not direct API calls.

Because that’s how the real world works. Logs flow through a message bus in production. Pushing directly to an API would be testing a scenario that doesn’t exist in practice. It also makes the benchmark applicable beyond any single platform. This is a realism requirement that drives the ingestion architecture.

Ensemble workloads, not single streams.

Because a real incident isn’t one data stream and one query stream. It’s multiple services generating logs at different rates while multiple engineers run different types of queries. Each scales independently. This is a fidelity requirement that drives the scenario model.

Each correction carries a why. AI can absorb rationale and apply it consistently across subsequent decisions. “Use Kafka because it models real production pipelines” doesn’t just fix the ingestion path. It establishes a design principle (model reality) that AI applies to every subsequent choice.

Note the meta-observation here: in the past, I would have reduced the scope of this project substantially because the design and implementation would have taken too long. I would have built the single-binary version and called it good enough. AI let me keep the full ambition of the architecture.

Step 5: Capture to a Formal Document

After several rounds of conversation, I asked AI to capture everything as a formal design document. This is a critical transition. Reading a spec on paper is a fundamentally different mode than participating in a conversation. Things that feel fine in the flow of discussion don’t always hold up when you read them cold.

The document became the object of review. I was no longer co-authoring in a conversational back-and-forth. I was reviewing a specification, the same way I’d review a design doc from an engineer on my team.

Step 6: Read, Re-read, Correct

I made multiple passes over the document, correcting style, content, and requirements. This is the most old-fashioned step in the entire workflow. You read. You think. You find the things that don’t sit right. There’s no shortcut here. AI wrote the document, but the judgment about whether it’s correct is yours.

Step 7: Ask for a Phased Implementation Plan

Once the spec felt solid, I asked AI to propose a phased implementation approach with detailed tasks, tests, and file layout for the project.

This step serves a dual purpose that’s easy to miss. The implementation plan is useful on its own, but it’s also a stress test of the spec. If AI can’t produce a coherent task breakdown with clear tests and a well-organized file layout, the spec has gaps. The implementation plan is a probe that reveals ambiguity you can’t see by reading the spec alone.

Step 8: Fix Gaps, Regenerate the Plan

The implementation plan exposed a glaring gap: there was no way to test the parts of the system without engaging the full functionality. You can’t run a meaningful test of the operator without a K8s cluster, Kafka, and a target platform all running. This is when I decided to add a dry-run feature.

I didn’t find this gap by reading the spec. I found it because the implementation plan forced AI to think about how each component would be tested, and it couldn’t produce a coherent answer. The plan was the probe. The dry-run feature was the fix. The fix went back into the spec. Then the plan was regenerated.

This loop (plan → gap → fix spec → regenerate plan) is one of the most valuable parts of the workflow. You’re using AI to simulate the implementation in order to find spec deficiencies, all before any code exists.

Step 9: Ask AI to Propose Improvements

With the spec and plan aligned, I asked AI what else should be added to complete the spec and improve usability. It suggested improvements to error reporting and additional edge case handling that I approved.

This is a different mode than Step 4. In Step 4, you’re correcting AI with your domain expertise. In Step 9, you’re inviting AI to apply its breadth across everything it’s seen in other systems. AI is good at finding the edge cases and usability gaps that you’re too close to the problem to see.

Step 10: Final Consistency Check

One full-document audit with AI. Does Section 3’s scenario model still align with Section 10’s implementation notes after all the changes? Do the CRD fields in Section 2 match the CLI flags in Section 8? Do the valid-run criteria in Section 6 reference metrics that are actually collected in Section 5?

In a spec this large (IncidentBench’s spec is approximately 90KB), internal contradictions accumulate silently. Humans are bad at catching them. AI is good at it when you explicitly ask.

Step 11: Generate User Documentation from the Spec

Before generating any code, I asked AI to produce user-facing documentation: a README with architecture overview, getting started guide, configuration reference, result interpretation, and make targets. All derived from the spec.

This step is another probe, and it’s one most people would never think to do. You’re validating consumability before implementation. If the documentation doesn’t make sense to a user, the spec has problems. If the getting started workflow is clunky, the architecture needs adjustment. If the configuration reference is confusing, the CRD schema needs simplification.

The README I generated reads like documentation for a mature, shipped project — complete with scorecard interpretation, configuration examples, and troubleshooting guidance. All of that existed before a single line of Rust was compiled. And if any of it had read wrong, I would have fixed the spec, not the code.

Step 12: Mechanical Code Generation

With the spec validated from three different angles, code generation was straightforward. I asked AI to proceed phase by phase (matching the implementation plan from Step 7), compiling as it went and running unit tests after each phase.

This part was genuinely mechanical. AI hit compiler errors and fixed them. Tests failed and AI fixed them. I didn’t intervene. The spec and Rust’s type system had already made all the architectural decisions. AI wasn’t inventing anything. It was translating from a thoroughly validated English specification into Rust, and the compiler verified the translation.

Three Probes Before Any Code

Looking back at this workflow, there are three distinct probes that validate the spec from different angles before code generation begins:

The implementation plan (Step 7)

Probes for architectural gaps. Can this actually be built in phases? Can each component be tested? This is where the dry-run feature was discovered.

AI-proposed improvements (Step 9)

Probe for edge cases and robustness. What error conditions are unhandled? What usability issues exist? This catches the things you’re too close to the problem to see.

User documentation (Step 11)

Probes for consumability. Does this make sense to someone who wasn’t in the design conversation? Is the workflow ergonomic? Is the output interpretable?

Each probe looks at the spec from a fundamentally different angle: implementation feasibility, robustness, and user experience. Running all three before writing code means you catch categories of problems that would otherwise surface as bugs, refactors, or user complaints after the system is built.

Anatomy of a Spec That Makes Code Generation Mechanical

Not all specs are created equal. A loose requirements document won’t make code generation work. Here’s what the IncidentBench spec contains that made the translation to Rust deterministic:

Exact type contracts.

The CRD schema specifies every field, every enum value, every status transition. The adapter trait defines exact function signatures with input types and return types. The protobuf definitions specify every message shape. When AI generates code against these, there’s almost no room for interpretation.

Fully enumerated state machines.

The operator lifecycle is a linear state machine: Pending, Preparing, Initializing, Running, Aggregating, Reporting, Completed, with every state able to transition to Failed. The phase barrier protocol is another state machine with explicit transitions and conditions. AI doesn’t need to reason about state management when the states and edges are already fully specified.

Concrete sequences with dependency ordering.

The Mach5 adapter’s prepare sequence specifies four numbered steps with exact API paths, exact JSON payloads, exact dependency ordering (Step 3 depends on Steps 1 and 2, Step 4 is independent of Step 3), and exact parallelization opportunities. The cleanup sequence is the reverse, with explicit idempotency requirements (a 404 response is treated as success).

Failure modes as first-class elements.

Valid run criteria are specified with exact thresholds: baseline p99 latency must remain under 5,000 milliseconds, achieved EPS must reach at least 90% of target, and at least 500 queries must execute for statistical significance. Separately, the recovery metric is defined precisely: query p99 returning to within 1.2x of baseline determines the recovery_time_s scorecard value. Harness saturation detection specifies the exact trigger: any worker exceeding 90% CPU. These are the edge cases that AI would otherwise hallucinate or silently ignore.

Design rationale alongside every decision.

Rust because no garbage collector means no latency jitter misattributed to the target. Kafka because it models real production pipelines and provides consumer lag as a natural backlog metric. T-digest for latency aggregation because it has bounded error. Rationale constrains AI from making “creative” alternative choices that break the architecture.

Concrete numeric examples everywhere.

The rate distribution table shows exactly what 50,000 events per second across 10 workers looks like in each phase. The schema has exact probability distributions for every field in every mode. The total event count is computed: approximately 13.5 million events. When AI has the numbers, it doesn’t estimate or approximate.

Ergonomics and UX specified, not deferred.

The CLI specifies every command and flag. The user workflow is four steps: apply, watch, report, delete. The live terminal UI specifies what it renders. The output directory structure is specified file by file. These aren’t details for “later.” They’re part of the spec because they affect the shape of the generated code.

Hard scope boundaries.

“v0.1 does not include comparison reporting.” “Custom phase definitions are a future extension.” “IncidentBench is not a throughput benchmark.” Every fence tells AI where to stop.

The repo structure is the architecture.

The spec defines the exact crate layout, file names, and module responsibilities. AI doesn’t need to decide where phase_controller.rs lives or whether the adapter trait belongs in the common crate or a separate one.

Testability is built in from the start.

Scaling flags exist so you can run a smoke test on a laptop. Success criteria are fourteen specific, verifiable assertions. Deterministic data generation means runs are reproducible.

The Role of the Type System

A spec this detailed still needs enforcement. AI-generated code can drift from the spec in subtle ways: a field gets the wrong type, a state transition gets skipped, an error path gets swallowed.

Rust’s type system, traits, and compiler catch this. They enforce correctness regardless of who or what wrote the code. When you combine a rigorous spec with a strong type system, AI code generation stops being a trust exercise and starts being an engineering process. You get the speed without giving up the rigor.

The spec defines the architecture. The types enforce it. The compiler verifies it. AI fills in the implementation. That’s the workflow.

What Got Built

IncidentBench exists because nobody benchmarks the moment that actually matters in production: when ingestion spikes and query spikes overlap simultaneously.

During a real outage, a cascading failure can spike log volume 10 to 50x while multiple engineers are simultaneously hammering the platform with investigative queries. Existing benchmarks measure throughput in isolation. Max ingest rate. Peak QPS. Storage efficiency. Those are useful numbers, but they tell you nothing about whether your platform survives the overlap.

IncidentBench fills that gap. It simulates a realistic six-phase incident lifecycle (baseline, incident trigger, ingestion surge, overlap, recovery, post-incident) and measures four properties that actually determine whether a platform holds up: stability (do query latencies hold under load?), isolation (does querying degrade ingestion or vice versa?), predictability (are tail latencies bounded?), and recovery (how fast does the system return to baseline?).

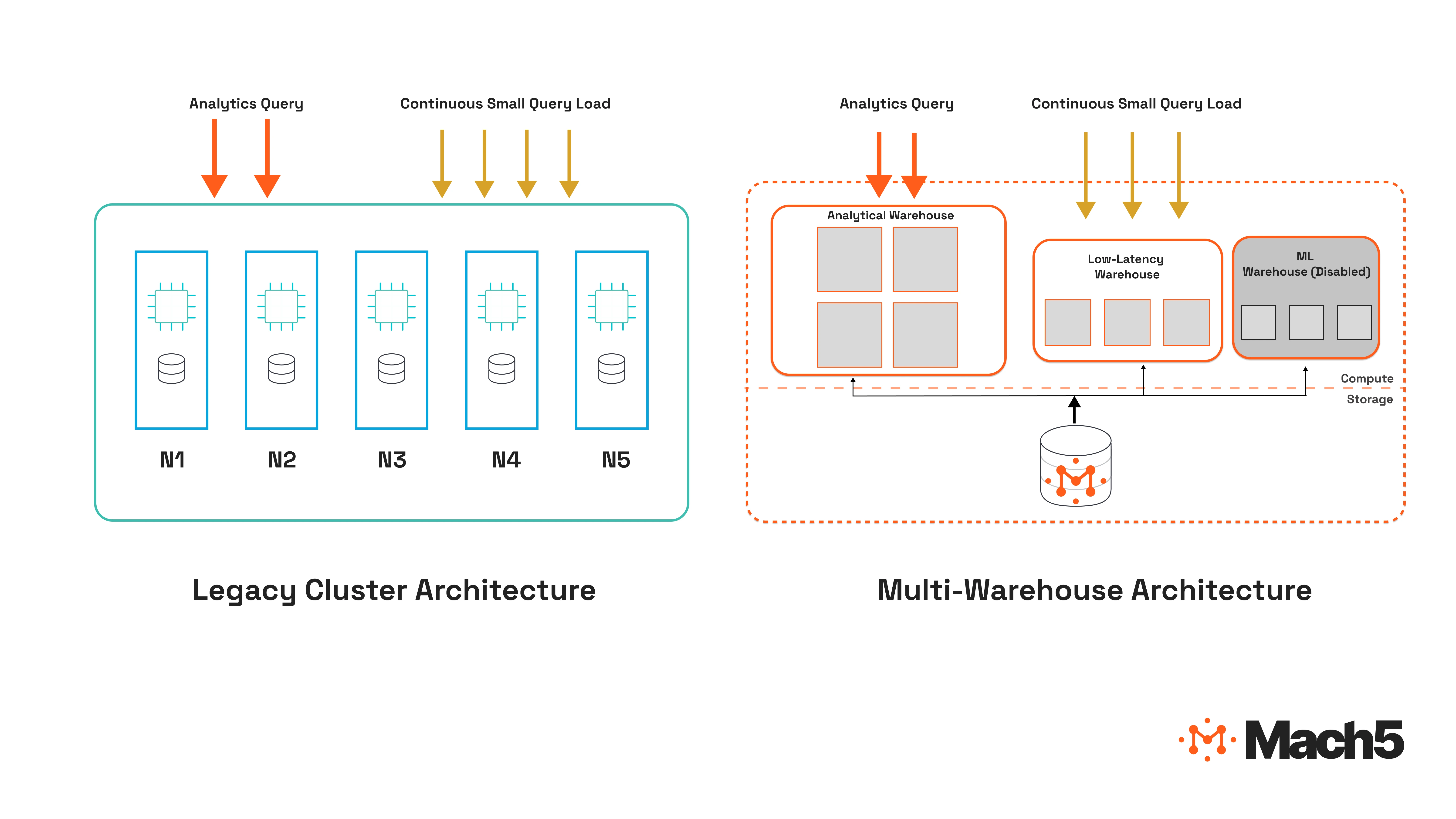

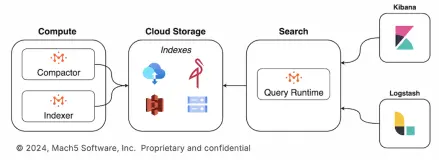

The architecture is pluggable. You can point it at any log analytics platform by implementing an adapter trait. The first adapter is for Mach5, and concepts like multi-warehouse mode (benchmarking different analyst personas hitting separate warehouses during peak load) surface naturally because workload isolation is a core primitive in how Mach5 is architected. If your platform has real query isolation, the benchmark reveals it. If it doesn’t, the benchmark reveals that too.

IncidentBench is open source under Apache 2.0. Full details and the GitHub link are at mach5.io/incidentbench.

Step 13: Code Review as Alignment

The workflow doesn’t end when the code compiles and tests pass. There’s a review step, and it matters for a reason that has nothing to do with finding bugs.

In a traditional engineering team, code review serves two purposes. The immediate purpose is catching issues. The deeper purpose is building a shared set of intangible values between developers: how you name things, how you handle errors, when you abstract and when you inline, what “clean” means in this codebase. Over time, a well-functioning review process means code converges toward a shared standard without anyone writing a style guide.

I’ve been doing the same thing with AI-generated code. Early on, I read every line. Partly to find issues, but mostly out of curiosity about how AI chooses to structure things. What patterns does it reach for? Where does it over-abstract? Where does it copy-paste when it should extract a function?

That initial deep reading was valuable because it taught me what to specify. I started adding guidelines to the spec: never panic unless it’s a genuine bug (use Result types for expected failures), stay DRY (reuse existing functions rather than reimplementing similar logic elsewhere in the codebase), and other conventions that I kept discovering through review. Each guideline is feedback from review into the specification process, exactly the way code review feedback between human developers gradually raises the bar for the whole team.

But reading every line doesn’t scale. Once you’re generating tens of thousands of lines, you need a different approach. I’ve settled on two types of spot checks, both using AI as a search engine into its own output.

Targeted queries.

I ask AI to show me specific things I know the code must handle correctly. “Show me the logic of how input data is parsed by the system.” “Show me how the operator handles a worker pod that crashes mid-run.” “Show me every place where a Kafka produce failure is handled.” This is the equivalent of a reviewer going straight to the parts of a PR that they know are tricky, rather than reading the whole diff top to bottom.

Trace analysis.

I create a concrete scenario the software should handle and ask AI to trace the path through the code. “Walk me through what happens when an IncidentBenchRun CR is applied with dry-run set to true, from the moment the operator sees it to the output.” This is more thorough than targeted queries because it crosses module and crate boundaries. It reveals whether the pieces actually fit together the way the spec says they should.

Both techniques use AI to build alignment with the codebase it generated. You’re not auditing every line. You’re building a mental model of the code’s shape and checking it against the spec. When you find a mismatch, you have a choice: fix the code, or (more often) fix the spec so the next round of generation gets it right.

This is the feedback loop that makes the whole workflow improve over time. Every review cycle tightens the spec. A tighter spec produces code that needs fewer corrections. Fewer corrections mean the review can focus on higher-level concerns rather than catching the same class of issue repeatedly. It’s the same dynamic that makes a good engineering team get faster over time, except the “team” is you and AI, and the institutional memory lives in the spec.

The Takeaway

The conventional framing of AI-assisted coding is: AI writes code faster. That’s true but uninteresting. The bigger shift is that AI lets you be far more ambitious with the specification. You can explore more thoroughly, define more rigorously, and pressure-test more edge cases before a single line of implementation exists.

The thirteen steps above aren’t a rigid methodology. They’re a sequence that emerged from building a real system. The core principle is simple: invest your time in the spec, use probes to validate it from multiple angles, let code generation be mechanical, and feed what you learn in review back into the spec so the next cycle is tighter.

The thinking is the product. The code is the output.